Shuchang Xu

Clear Water Bay, Hong Kong

About Me

I’m a PhD candidate in Computer Science at the Hong Kong University of Science and Technology, advised by Prof. Huamin Qu at VisLab. I’m currently a visiting student at MIT Media Lab, working with Prof. Pattie Maes at the Fluid Interfaces Group. My research focuses on developing AI-powered assistive systems that augment human perception and cognition, with an emphasis on accessibility for blind and aging populations. Before my PhD studies, I earned my bachelor’s and master’s degrees from Tsinghua University: an M.S. in Computer Science (advised by Prof. Yuanchun Shi), a B.E. in Electrical Engineering, and a B.A. in Digital Media Art. I also spent two years as a software product manager at Xiaomi Corporation.

News

- [Apr 2026] I’ve started my visiting program at MIT Media Lab. Excited to connect with you!

- [Mar 2026] Check out our online demo for Sonic Stage, a system that augments spatial awareness for blind users watching film and television.

- [Feb 2026] One collaborative paper and one poster have been accepted to CHI 2026.

- [Sep 2025] Our collaborative work NeuroSync received the Best Paper Honorable Mention Award at UIST 2025🏅.

- [Jul 2025] Three full papers have been accepted to UIST 2025: one first-author paper on 360° video accessibility and two collaborative papers on human-AI collaboration.

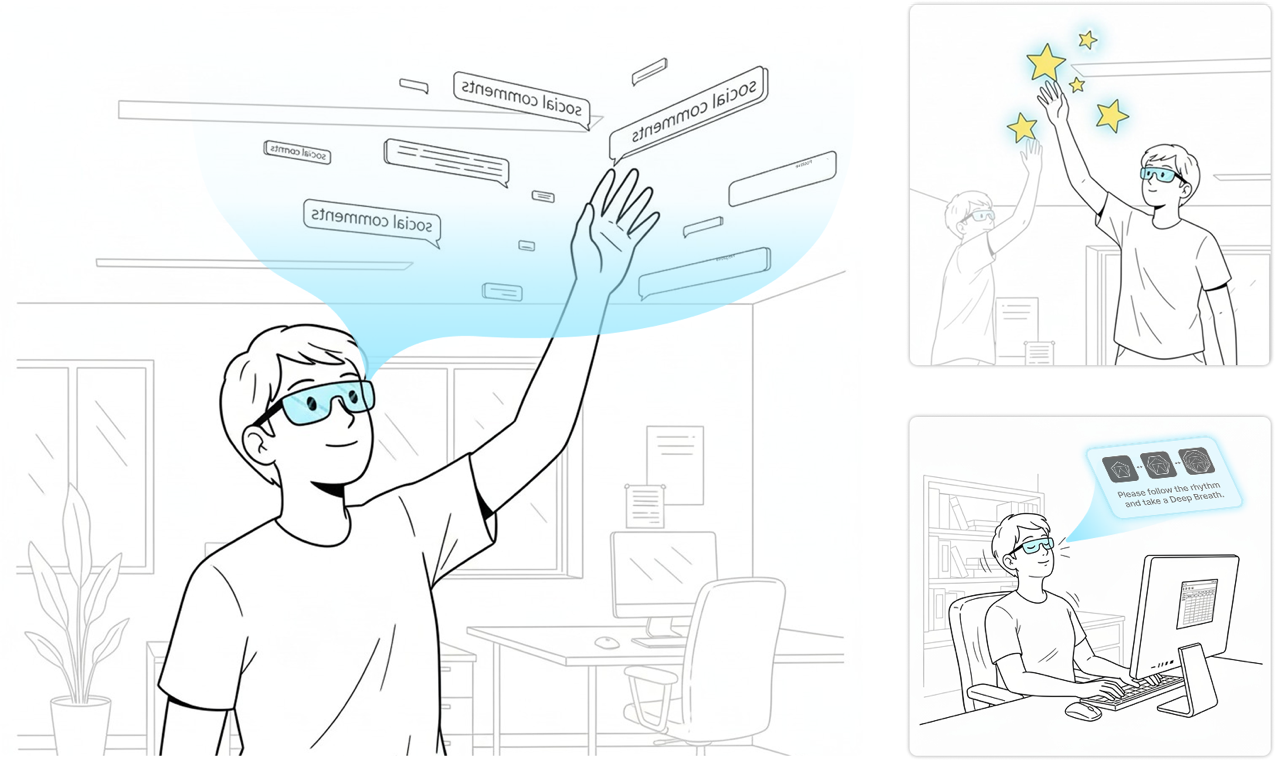

- [Apr 2025] Our work DanmuA11y received the Best Paper Honorable Mention Award at CHI 2025🏅.

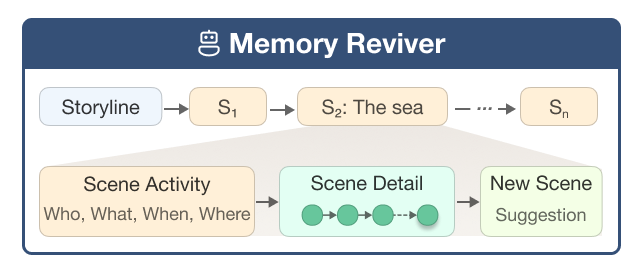

- [Aug 2024] Our work Memory Reviver has been accepted to UIST 2024.

Research Overview

My research centers on developing AI-powered assistive systems that augment human perception and cognition for blind and aging populations. I pursue this vision through three core areas:

1. Personalized Memory Agents for Reminiscence: I develop personal memory agents that help users relive meaningful memories across diverse settings: (1) independent reminiscence with proactive chatbots (UIST 2024), (2) collaborative reminiscence with therapist-in-the-loop support in dementia care, and (3) everyday reminiscence support integrated into daily routines. These systems incorporate cognitive science knowledge into AI agents to make reminiscence emotionally resonant and engaging.

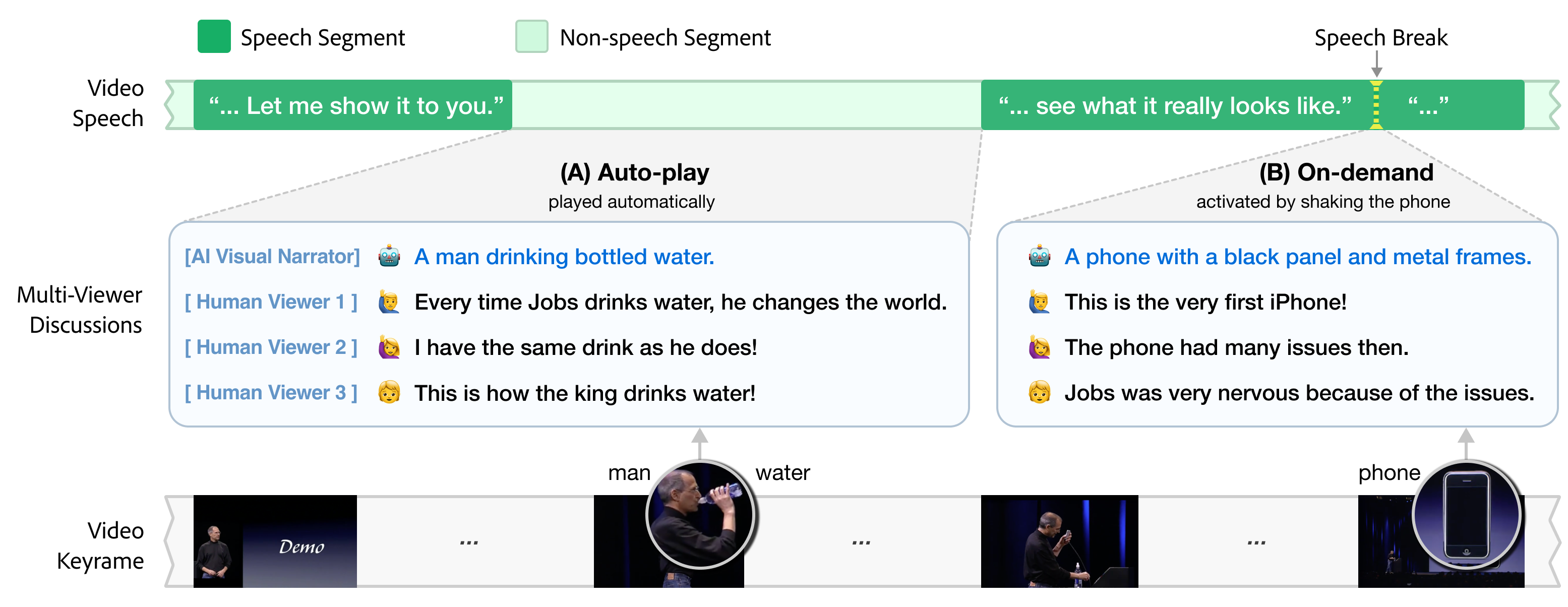

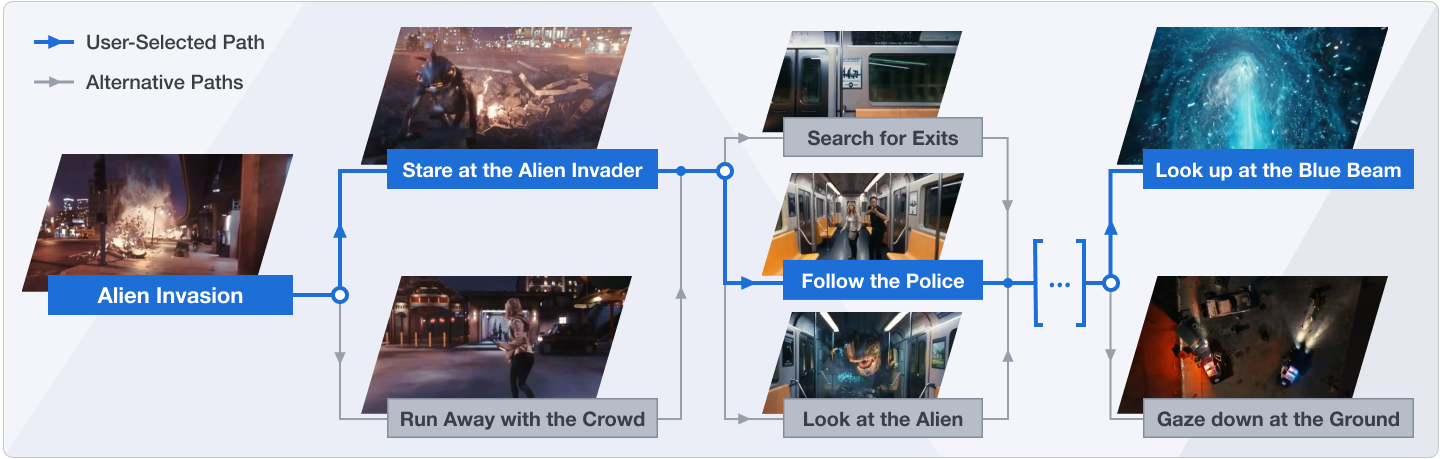

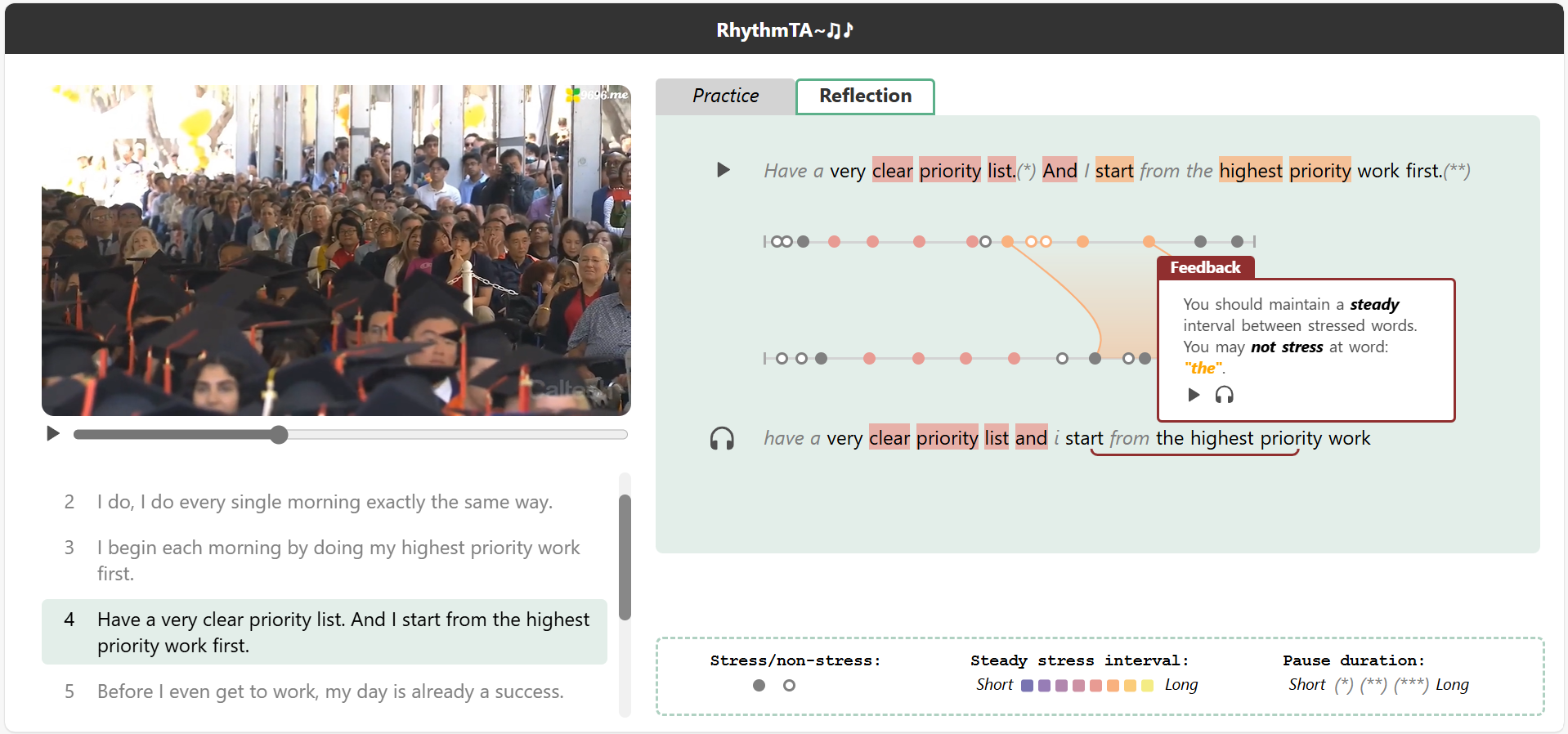

2. Multi-Modal AI Systems for Video Accessibility: I build machine learning pipelines that enable blind and low vision users to understand and engage with video content. Projects include making online video comments accessible (CHI 2025, Best Paper Honorable Mention), enabling interactive exploration of 360° videos (UIST 2025), and enhancing spatial awareness in film and television shows (Sonic Stage). These systems leverage multi-modal machine learning and expressive audio feedback to create immersive viewing experiences for blind viewers.

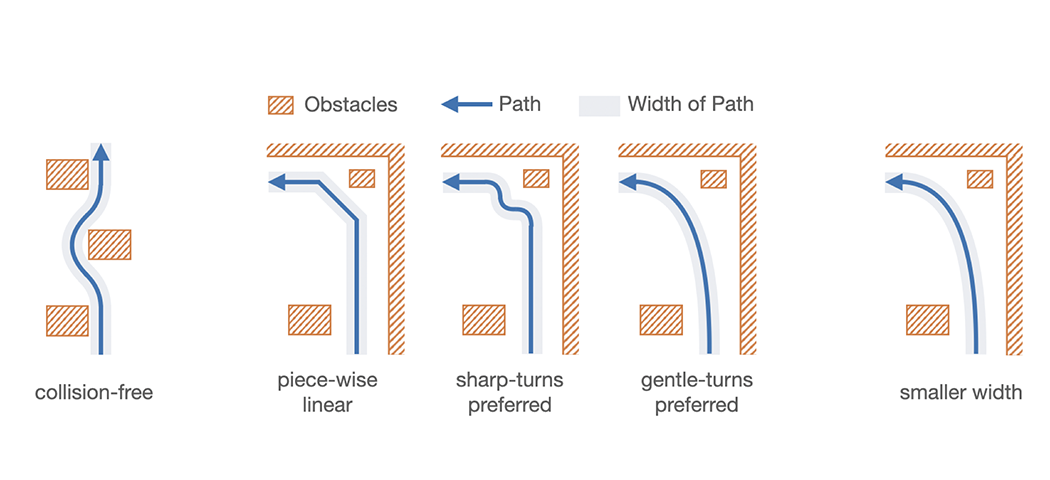

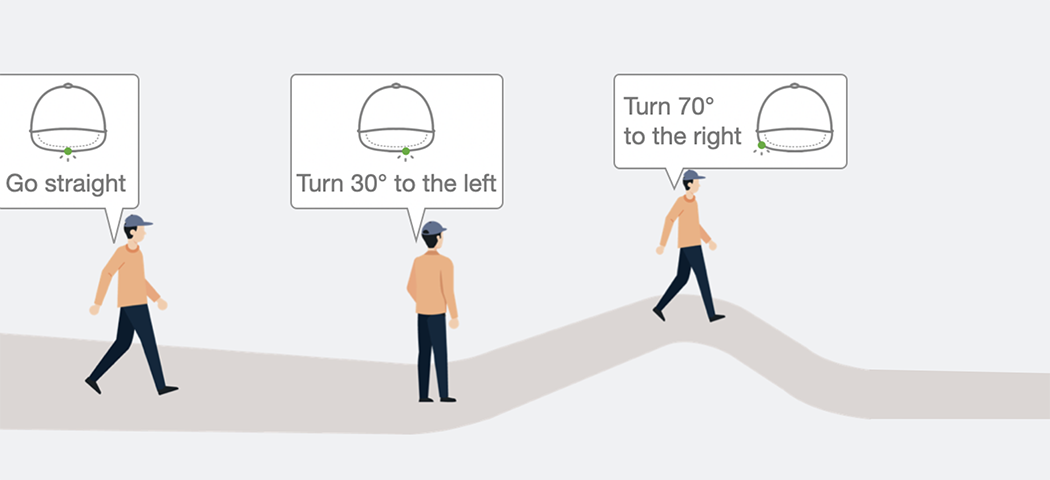

3. Multi-Sensory Interfaces for Outdoor Navigation: I develop wearable systems that support navigation for blind and low vision users through multi-sensory feedback, such as on-body haptics (IMWUT 2020), handheld vibrations (CHI 2021), and head-mounted light cues (IMWUT 2021). These systems provide real-time directional feedback to help users navigate independently and accurately.

Publications

-

CHI 25

CHI 2025, Best Paper Honorable Mention Award 🏅

CHI 25

CHI 2025, Best Paper Honorable Mention Award 🏅 -

UIST 25

Proceedings of the ACM Symposium on UIST, 2025

UIST 25

Proceedings of the ACM Symposium on UIST, 2025 -

UIST 25

UIST 2025, Best Paper Honorable Mention Award 🏅

UIST 25

UIST 2025, Best Paper Honorable Mention Award 🏅 -

CHI 26

Proceedings of the CHI Conference, 2026

CHI 26

Proceedings of the CHI Conference, 2026 -

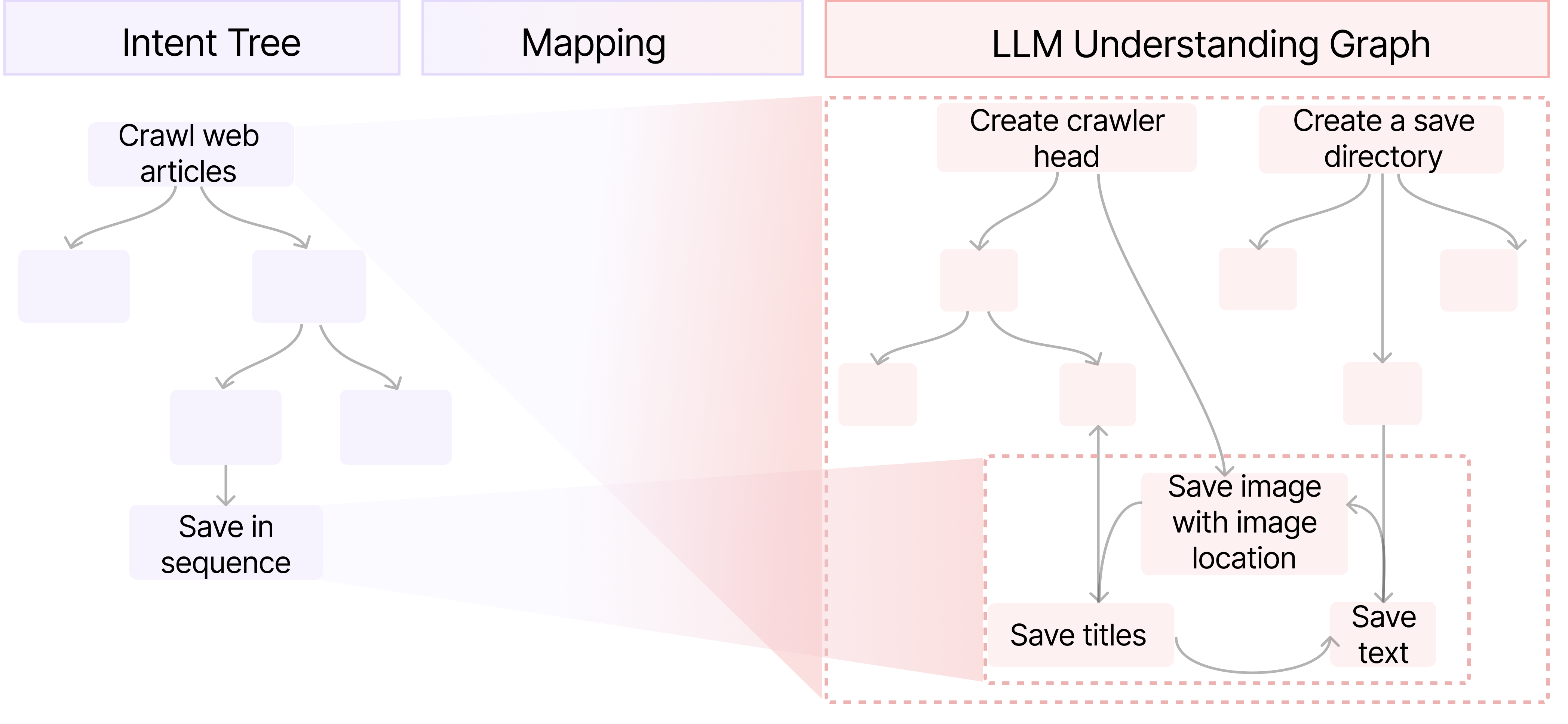

UIST 25

Proceedings of the ACM Symposium on UIST, 2025

UIST 25

Proceedings of the ACM Symposium on UIST, 2025 -

UIST 24

Proceedings of the ACM Symposium on UIST, 2024

UIST 24

Proceedings of the ACM Symposium on UIST, 2024 -

IMWUT 20

Proceedings of the ACM on IMWUT, 2020

IMWUT 20

Proceedings of the ACM on IMWUT, 2020 -

IMWUT 21

Proceedings of the ACM on IMWUT, 2021

IMWUT 21

Proceedings of the ACM on IMWUT, 2021 -

CHI 21

Proceedings of the CHI Conference, 2021

CHI 21

Proceedings of the CHI Conference, 2021 -

UIST 19

Proceedings of the ACM Symposium on UIST, 2019

UIST 19

Proceedings of the ACM Symposium on UIST, 2019 -

DAC 18

Proceedings of Design Automation Conference. 2018

DAC 18

Proceedings of Design Automation Conference. 2018

Game Projects

Beyond my research, I design indie games that engage players with real-world societal issues. These projects include:

• Educational Games

• Games Reflecting Society

• Games Beyond the 2D Screen

• Rendering Techniques

Awards

- [Jan. 2026] HKUST Global Research Award

- [Sep. 2025] Best Paper Honorable Mention Award at UIST 2025

- [Apr. 2025] Best Paper Honorable Mention Award at CHI 2025

- [Apr. 2024] Hong Kong Postgraduate Fellowship Scheme (HKPFS)

- [Jun. 2021] Outstanding Graduates in Computer Science and Technology, Tsinghua University

- [Oct. 2020] National Scholarship for Postgraduates

- [Oct. 2019] National Scholarship for Postgraduates

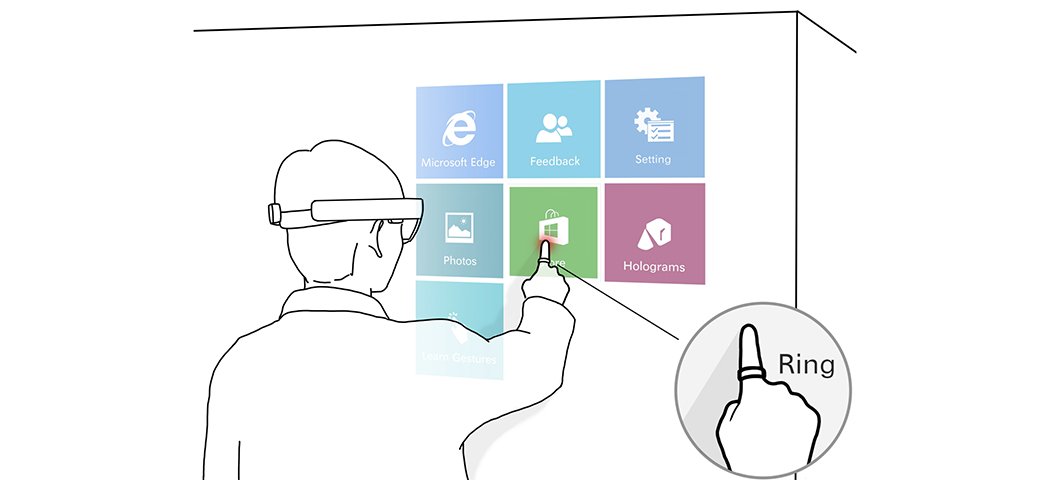

- [Dec. 2017] Microsoft Design Contest for Mixed Reality, 2nd place

- [Oct. 2017] National Scholarship for Undergraduates